Pedagogical innovation vs. content reduction: a distinction worth defending.

The word "innovative" has been carrying a great deal of weight in undergraduate science curriculum conversations. Some of what travels under the label is rigorously evidence-backed and transformative. Some of it is content reduction with a marketing budget. The two should not be discussed as if they were the same thing.

A great deal has changed for the better in undergraduate science teaching over the past two decades. Active-learning techniques, peer instruction, process-oriented guided inquiry learning, team-based learning, and high-structure course design have moved from experimental fringe to mainstream practice, and the evidence base supporting them (published in PNAS, CBE—Life Sciences Education, and Advances in Physiology Education) is among the most robust in higher education research. These methods deserve the energy and resources institutions have put behind them.

Less helpfully, the success of that evidence base has produced an adjacent phenomenon: an expanding category of curricular changes that borrow the language of pedagogical innovation without sharing its methods or its evidence. The label travels easily; the underlying rigor does not. This is not a complaint about novelty. It is a request that we apply the same evidentiary standard to curriculum changes called "innovative" that we would apply to any other claim about student learning.

The cost of failing to make this distinction is not abstract. It gets paid by students who arrive at downstream programs missing competencies their transcripts say they have, and by faculty in those downstream programs who must spend the first weeks of their courses backfilling material that was reduced under the wrong label.

What evidence-backed pedagogical innovation actually looks like

A short, generous tour of the real thing comes first, so that the critique that follows is read against it. Each of the pedagogies below has peer-reviewed effectiveness data, replicated across institutions and disciplines, with effect sizes that hold up under meta-analytic scrutiny. None of them claims to be a panacea. All of them earned their place in mainstream practice on the strength of that evidence.

- Active learning, broadly construed. The Freeman et al. 2014 PNAS meta-analysis (225 studies, tens of thousands of students) found examination scores roughly half a standard deviation higher in active-learning sections than in traditional lectures, with failure rates about 55% higher under traditional lecturing than under active learning [1].

- Peer instruction, originating in Mazur's physics work and replicated across biology, chemistry, and engineering. The technique is structurally simple (conceptual question, individual answer, peer discussion, revote) and the gains in conceptual understanding are consistently large [2].

- POGIL (Process Oriented Guided Inquiry Learning), structured collaborative work in which students construct understanding from carefully designed inquiry sequences. Outcome studies in chemistry and biology report improved retention and reduced equity gaps relative to traditional instruction [3].

- Team-based learning (Michaelsen and colleagues), structured small-group work with built-in individual and team accountability. Widely adopted in health-professions education, with documented effects on critical thinking and content mastery [4].

- High-structure course design, which combines frequent low-stakes assessment, guided pre-class preparation, and explicit metacognitive support. Eddy and Hogan showed it substantially closes the achievement gap for historically underserved students without lowering the bar [5].

Each of these innovations either keeps content constant and improves comprehension, or modifies content in carefully tested ways with documented outcomes. None of them rest their case on the word "innovative." They rest their case on data.
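The failure-rate figure from Freeman et al. is easy to misstate, because the size of a relative change depends on which group serves as the baseline. The following is a minimal sketch of that arithmetic, using the average failure rates reported in the meta-analysis (33.8% under traditional lecturing, 21.8% under active learning). The function and variable names are illustrative only.

```python
# Average failure rates reported in Freeman et al. (2014).
FAIL_RATE_LECTURE = 0.338  # traditional lecturing
FAIL_RATE_ACTIVE = 0.218   # active learning


def relative_change(new: float, baseline: float) -> float:
    """Return the change from baseline to new, as a fraction of baseline."""
    return (new - baseline) / baseline


# Lecturing measured against active learning as the baseline.
increase_under_lecture = relative_change(FAIL_RATE_LECTURE, FAIL_RATE_ACTIVE)

# Active learning measured against lecturing as the baseline.
decrease_under_active = relative_change(FAIL_RATE_ACTIVE, FAIL_RATE_LECTURE)

print(f"Lecturing vs. active learning: {increase_under_lecture:+.1%}")
print(f"Active learning vs. lecturing: {decrease_under_active:+.1%}")
```

The same twelve-point difference reads as a 55% increase in failure under lecturing, or as a reduction of roughly 35% under active learning. Both statements are accurate; they are not interchangeable.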

Where the imitation pattern shows up

The harder question, and the one this essay exists to ask, is where curricular changes adopt the language of evidence-backed innovation without sharing its substance. The pattern is rarely intentional, and it is rarely accompanied by bad faith. It is usually a side effect of an attractive label travelling faster than the methodology that earned it in the first place.

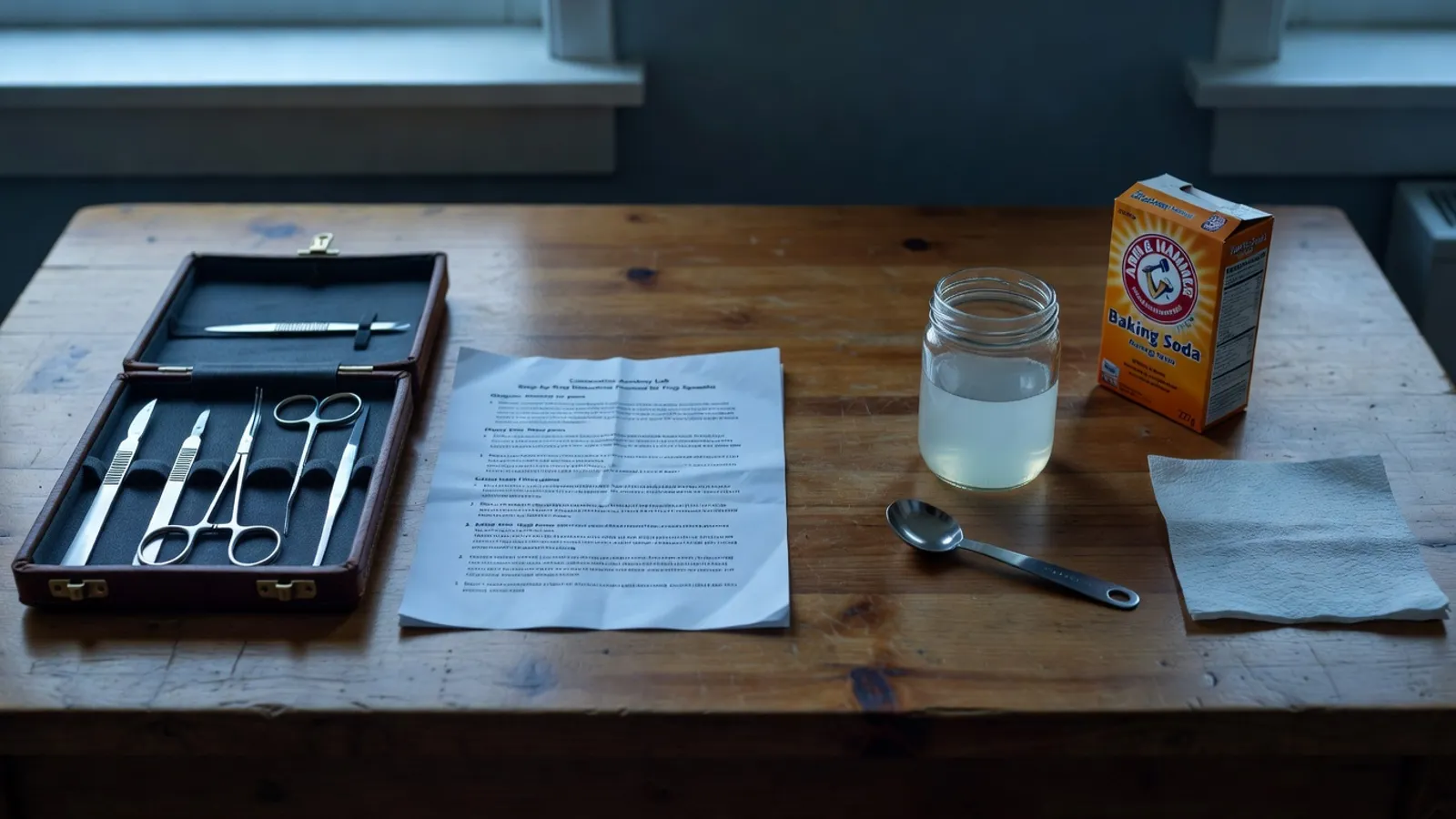

A non-exhaustive set of examples, deliberately abstracted from any specific institution: gateway-course “modernization” that replaces discipline-specific content with general study-skills activities, on the argument that study skills are more transferable; lab-replacement projects that substitute household materials for tasks previously requiring professional instruments, on the argument that the simpler version teaches “the same concept”; capstone assignments that substitute reflective writing for procedural demonstration, on the argument that reflection deepens learning. Each of these can be valuable in the right context. The pattern that distinguishes them from evidence-backed innovation is not their shape; it is the absence of an answer to the question “what does the evidence say this produces, in this context, at this scale?”

1. What construct does this change improve? And how, specifically, is the improvement measured?

2. What peer-reviewed evidence supports the claim? Replicated outcomes in comparable settings, not anecdotes.

3. Does the change preserve, reduce, or transform content? Innovation that reduces content under another name is still content reduction.

4. How will we know in 2–3 years whether it worked? Outcomes-tracking plan attached, or proposal returned for revision.

5. Who bears the cost if the change didn’t work? Usually the students, two cohorts later. Worth naming explicitly.

Why the conflation is expensive

Treating the two categories as one carries three distinct costs, which compound on different timescales:

- Reputational cost to genuine innovation. When weak imitations adopt the label, the label loses meaning and faculty become — understandably — skeptical of subsequent proposals. The next evidence-backed reform has to spend its initial credibility refuting the previous unbacked one.

- Cost to students. Reduced content is felt downstream by students who took the course in good faith, often years later, in the program that expected them to arrive with what the catalog said they would have. Backfilling that content is the receiving program's burden, not the sending program's, and the students absorb the gap in between.

- Cost to institutional learning. When the outcomes of a curriculum change are not measured, the institution cannot learn from the change. The next change is then no better informed than the last, and the cycle continues. This is the cost most often missed in the moment, because the absence of measurement is itself unmeasured.

The question is not whether to innovate. The question is whether we are holding ourselves to the same standard of evidence we would expect of any other claim made about how students learn.

How a curriculum committee can tell the difference

The criteria are practical and do not require specialized training. A committee can apply them, quickly, to any proposed change: the five-question checklist above; the presence or absence of an outcomes-tracking plan; whether the proposal cites peer-reviewed evidence or only anecdotal success stories from early adopters; whether the change has been piloted at scale elsewhere with measurable outcomes that bear on the proposed context.

The 2015 National Academies report on undergraduate science instruction makes a similar recommendation in formal language: curriculum decisions in evidence-rich domains should be held to the standard of evidence available in those domains, not to the weaker standard implied by the word “innovation” alone [6]. That is not a high bar. It is the bar a curriculum committee would apply to any other claim about how students learn.

A practical implication

The recommendation is procedural, not philosophical. Every proposed curriculum change — especially in courses with downstream credentialing weight — should arrive with two documents attached: a one-page evidence brief, summarizing what the relevant literature says about changes of this kind in comparable contexts; and a one-page outcomes-tracking plan, naming what the program will measure to find out whether the change worked, and on what timeline.

Proposals that cannot supply both are not yet ready for committee review. This is not a barrier to innovation. It is the discipline that distinguishes innovation from its imitations, and the only one that survives committee turnover. The benefit accrues to the proposals that genuinely have the evidence: they clear committee faster, with stronger institutional support, because they brought what the standard required. The proposals that don't have the evidence get a useful answer of a different kind — an answer about what evidence would be required before the change is wise.
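For committees that track proposals in a spreadsheet or intake form, the two-document rule is simple enough to state as a check. The sketch below is illustrative only: the `Proposal` record, its field names, and both helper functions are hypothetical, and it assumes that a blank field means the document was not supplied.

```python
from dataclasses import dataclass


@dataclass
class Proposal:
    """Hypothetical record of a proposed curriculum change."""
    title: str
    evidence_brief: str = ""          # one-page summary of the relevant literature
    outcomes_tracking_plan: str = ""  # what will be measured, and on what timeline


def missing_documents(proposal: Proposal) -> list[str]:
    """List the required attachments that the proposal has not supplied."""
    missing = []
    if not proposal.evidence_brief.strip():
        missing.append("evidence brief")
    if not proposal.outcomes_tracking_plan.strip():
        missing.append("outcomes-tracking plan")
    return missing


def ready_for_review(proposal: Proposal) -> bool:
    """A proposal is ready for committee review only when nothing is missing."""
    return not missing_documents(proposal)


# Example: a proposal that arrives with an evidence brief but no tracking plan.
draft = Proposal(
    title="Gateway course redesign",
    evidence_brief="Summary of active-learning outcomes in comparable courses.",
)
print(ready_for_review(draft))   # False
print(missing_documents(draft))  # ['outcomes-tracking plan']
```

The check only confirms that both documents are present. Judging whether the evidence brief is adequate remains the committee's work.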

References & further reading

1. Freeman, S., Eddy, S. L., McDonough, M., Smith, M. K., Okoroafor, N., Jordt, H., & Wenderoth, M. P. (2014). “Active learning increases student performance in science, engineering, and mathematics.” Proceedings of the National Academy of Sciences, 111(23), 8410–8415. doi:10.1073/pnas.1319030111. The largest meta-analysis to date of active-learning effects in undergraduate STEM.

2. Crouch, C. H., & Mazur, E. (2001). “Peer instruction: ten years of experience and results.” American Journal of Physics, 69(9), 970–977. doi:10.1119/1.1374249. See also Smith, M. K., et al. (2009), “Why peer discussion improves student performance on in-class concept questions,” Science, 323(5910), 122–124, for the biology replication.

3. Walker, L., & Warfa, A.-R. M. (2017). “Process oriented guided inquiry learning (POGIL®) marginally effects student achievement measures but substantially increases the odds of passing a course.” PLOS ONE, 12(10), e0186203. doi:10.1371/journal.pone.0186203. Meta-analytic synthesis of POGIL effects across STEM disciplines.

4. Burgess, A., van Diggele, C., Roberts, C., & Mellis, C. (2020). “Team-based learning: design, facilitation and participation.” BMC Medical Education, 20(Suppl 2), 461. doi:10.1186/s12909-020-02287-y. A representative recent overview of team-based learning in health-professions education with outcomes summary; the originating treatment is Michaelsen, L. K., Knight, A. B., & Fink, L. D. (eds.) (2004), Team-Based Learning: A Transformative Use of Small Groups in College Teaching, Stylus.

5. Eddy, S. L., & Hogan, K. A. (2014). “Getting under the hood: how and for whom does increasing course structure work?” CBE—Life Sciences Education, 13(3), 453–468. doi:10.1187/cbe.14-03-0050. See also Theobald, E. J., et al. (2020), “Active learning narrows achievement gaps for underrepresented students in undergraduate science, technology, engineering, and math,” PNAS, 117(12), 6476–6483.

6. National Research Council. (2015). Reaching Students: What Research Says About Effective Instruction in Undergraduate Science and Engineering. Washington, DC: National Academies Press. doi:10.17226/18687.

Drafted May 2026.