Replacing bench work with paper exercises: a measurement problem.

Substituting a paper exercise for bench work is a reasonable thing to consider. It is also a hypothesis, and the transfer-of-learning literature has spent decades testing hypotheses of this form. The findings are not what we might wish them to be.

Suggestions to replace bench activities with paper exercises usually arrive in good faith. They tend to be motivated by real concerns: lab time is expensive, prep load is high, supply costs have risen, and some students find the bench environment intimidating. Each of those concerns is legitimate and deserves a serious response.

The proposed solution — replace the bench task with a paper analog — rests on a specific empirical claim: that performing a paper analog of a task produces learning equivalent, or at least comparable, to performing the task itself. This is what the cognitive-science literature calls a transfer claim, and transfer is one of the most-studied questions in educational psychology. We do not need to speculate about whether the claim is true; the literature has tested versions of it for the better part of a century.

The short summary of that literature is that transfer from a simpler, abstracted task to a more complex, authentic one is measurable but limited, and the limits are tighter than intuition suggests. The practical implication is not that paper exercises are worthless (they are often valuable) but that they cannot, in general, do the work of the bench task they are meant to replace. Treating them as replacements introduces a measurement gap that becomes visible only later, often after students have moved downstream.

What “transfer” means in the learning-sciences literature

A short technical primer is worth pausing on, because the everyday meaning of “transfer” and the technical meaning are different in instructive ways. In the learning sciences, transfer is the application of learning acquired in one context to a different context. The literature distinguishes near transfer — where the new context closely resembles the original learning context — from far transfer, where the contexts differ substantially along multiple dimensions.

Near transfer is reliable and easy to demonstrate: if a student has learned to identify a structure on diagram A, they will generally identify the same structure on diagram B. Far transfer is the historically difficult case. The classic Detterman and Sternberg volume Transfer on Trial argued, on the basis of a substantial review, that far transfer is rare in laboratory studies and harder to engineer than intuition suggests.¹ Barnett and Ceci's later taxonomy formalized the dimensions along which contexts can differ (physical, temporal, functional, social, modality, and knowledge domain) and argued that transfer becomes less likely as more of those dimensions diverge.²

Substituting a paper analog for a bench task is, in this framework, a far-transfer case. The contexts differ in physical modality (paper vs. specimen), in functional structure (recall vs. perceptual identification plus procedural execution), and often in social arrangement (individual vs. paired work). The relevant empirical question is not whether transfer happens at all (some always does) but how much, on which sub-skills, and under what conditions. The answers, taken seriously, are uncomfortable for the substitution case.

What the substitution actually preserves

It is worth being specific about what paper analogs do retain, because the case for the substitution is not zero. A well-designed paper exercise can support declarative knowledge of structures or procedures named on the page. It can train recognition of correct sequences when the steps are listed and the student is asked to order or identify them. It can build the ability to label a diagram — sometimes more efficiently than the bench, since the labeled diagram is the test condition.

These outcomes are real and worth teaching. They are also, notably, what the lecture portion of the course already teaches. Substituting paper for bench in the name of efficiency does not add a new instructional channel; it adds a second channel for the same construct already covered by the first, while removing the channel that covered something different.

What the substitution measurably loses

The four bench-dependent learning categories laid out in essay 01 — tactile and procedural memory, decision-making under genuine uncertainty, the find-the-thing perceptual gap, and social calibration on a shared task — do not survive the paper substitution. None of them. Procedural memory requires repetition with the actual instrument; uncertainty requires an actual unfamiliar specimen; perceptual identification requires unlabeled real exemplars; social calibration requires a shared physical referent and a partner to coordinate around.

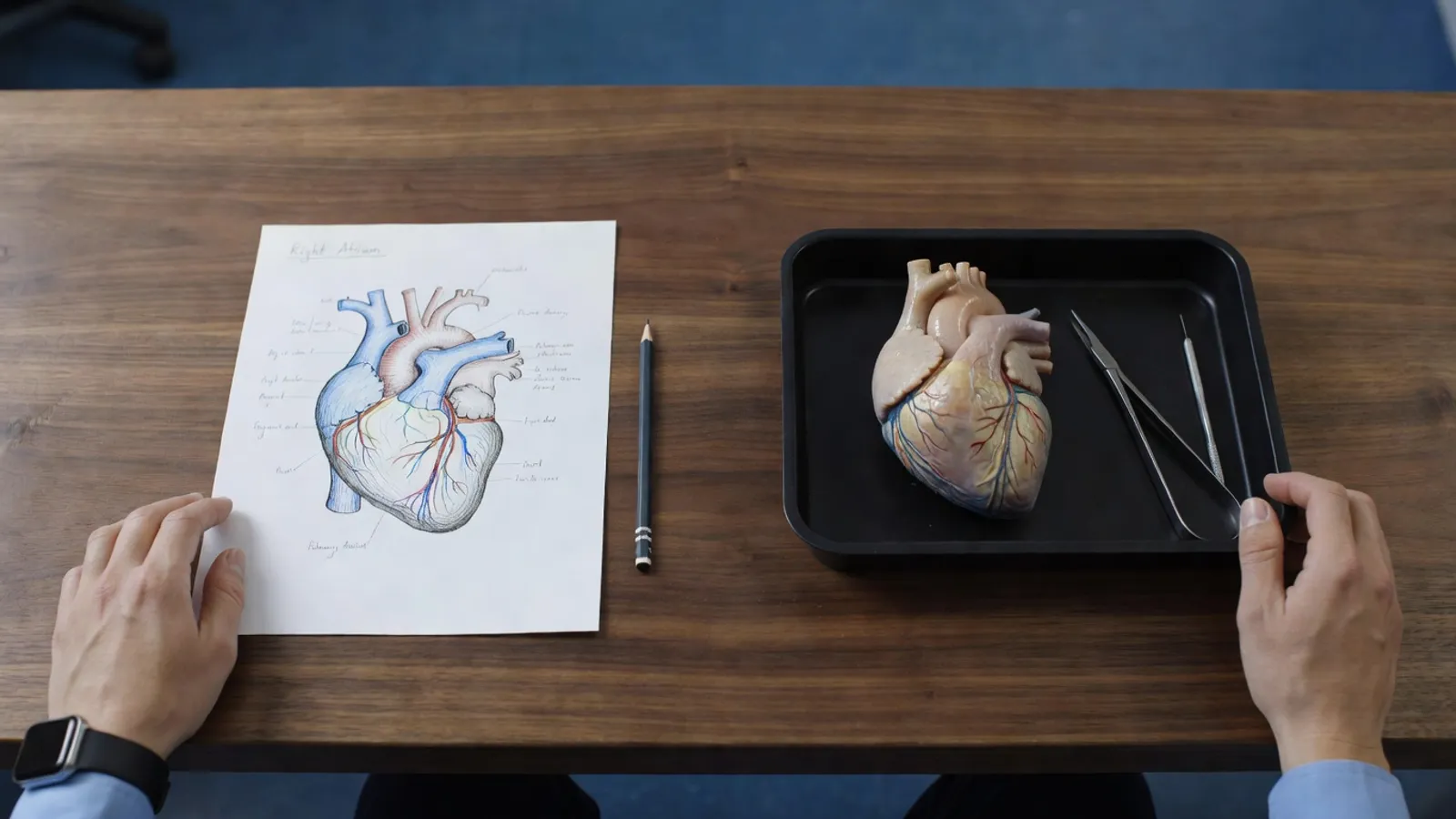

Each of these losses has a direct downstream consequence in clinical practice. The simulation-based medical education literature points the same way: McGaghie and colleagues' meta-analysis found that hands-on simulation with deliberate practice outperformed traditional clinical education on specific clinical-skill outcomes.³ The table below summarizes which constructs survive the substitution and where the gaps tend to surface.

| Learning category | Built by bench task | Built by paper analog | Where the gap shows up |
|---|---|---|---|
| Declarative knowledge | Yes | Yes | No gap; either works |
| Diagram recognition | Yes | Yes | No gap; either works |
| Procedural memory | Yes | No | First clinical procedure |
| Decision under uncertainty | Yes | No | Atypical patient presentation |
| Perceptual identification | Yes | No | Specimen / chart / scan |
| Social calibration | Yes | No | First clinical team |

The latency problem

A subtle but important methodological point: the measurement gap created by paper substitution often does not appear in end-of-course assessment scores, especially if those assessments are themselves paper-based. The student who learned procedural steps from a worksheet can answer a multiple-choice question about those steps perfectly well. The gap appears later — in the clinical rotation, in the first job, in the patient-care setting where procedural execution and decision-under-uncertainty are what the role actually requires.

The transition-to-practice literature in nursing has documented this pattern at scale. Kavanagh and Szweda's national study of new-graduate nurses found that fewer than one in four entered practice with the level of clinical-reasoning competency expected for safe practice, even though the same graduates had passed their academic coursework and the NCLEX.⁴ Casey, Fink, and colleagues' graduate-nurse experience surveys report a similar lag between credentialed and applied competence in the first six to twelve months of practice.⁵ By then, the curriculum committee that decided on the substitution has usually moved on to other questions.

The measurement gap is not invisible. It is delayed. The difference matters because the curriculum decision and the consequence of the decision are decoupled by years.

Where paper substitution is defensible

The argument above is a case against substitution — not a case against paper exercises. The two are different, and the difference matters. There are several conditions under which paper-based work is genuinely the right tool, and an essay that ignored them would be worth less than one that named them.

Paper exercises are excellent for pre-lab preparation, where their function is to bring the student into the lab session ready to spend the bench time on the procedural and perceptual work, not on memorizing names. They are excellent for moving rote memorization off the bench, freeing the bench hour for tasks that require it. They are excellent for assessing declarative knowledge specifically, when that is the construct the assessment is designed to measure. And they are essential for accommodation: students who cannot attend a particular lab session for documented reasons need a defensible alternate path, and paper-based work is often the right one.

The principle that organizes these cases is straightforward. Paper exercises are excellent complements to bench work. They are weak substitutes for it. The two roles look superficially similar — both involve students doing paper-based tasks — but they are different decisions about what the program is trying to teach, and they have different downstream consequences.

What this implies about cost-driven substitution decisions

The motivation for substituting paper for bench is, in honest conversation, almost always cost. Bench time is expensive. Prep load is high. Supplies and consumables have risen in price. Sections compete for limited lab space and limited instructor coverage. The cost concern is legitimate, and dismissing it is neither fair nor effective.

The analytical move that follows from the argument above is to ask whether the cost can be addressed without substituting away the construct. Several routes are usually available before substitution becomes the only remaining option: better scheduling, so that bench time is used more efficiently; a review of supplies and consumables; cross-section standardization to reduce prep load per offering; pilot reductions tested against measurable outcomes before broad rollout; and, where a reduction is necessary, careful selection of which bench tasks to reduce (those serving fewer of the four learning categories) and which to defend (those serving several at once).

A practical implication

For any program weighing paper substitution for a bench activity, three questions are worth answering honestly before the change is made:

- What construct does the bench task measure that the paper analog does not? (If the answer is "nothing," the substitution is fine.)

- How will we detect, in the next 1–3 years, whether the substitution has produced a downstream gap? (If the answer is "we won't," that is itself the answer.)

- What is the cost saving, expressed honestly, against the measurement loss? (Sometimes the saving is real and the loss is small. Sometimes not.)

A program that can answer these three questions clearly is making a well-founded decision either way. A program that cannot is making one anyway.

References & further reading

- Detterman, D. K., & Sternberg, R. J. (Eds.). (1993). Transfer on Trial: Intelligence, Cognition, and Instruction. Norwood, NJ: Ablex. The volume that crystallized the modern transfer-skepticism position, with a substantial review of why far transfer has been hard to demonstrate empirically.

- Barnett, S. M., & Ceci, S. J. (2002). “When and where do we apply what we learn? A taxonomy for far transfer.” Psychological Bulletin, 128(4), 612–637. doi:10.1037/0033-2909.128.4.612. The standard taxonomic framework for thinking about transfer distance along multiple dimensions.

- McGaghie, W. C., Issenberg, S. B., Cohen, E. R., Barsuk, J. H., & Wayne, D. B. (2011). “Does simulation-based medical education with deliberate practice yield better results than traditional clinical education? A meta-analytic comparative review of the evidence.” Academic Medicine, 86(6), 706–711. doi:10.1097/ACM.0b013e318217e119. The meta-analytic evidence that hands-on simulation with deliberate practice outperforms traditional clinical education on specific clinical-skill acquisition outcomes.

- Kavanagh, J. M., & Szweda, C. (2017). “A crisis in competency: the strategic and ethical imperative to assessing new graduate nurses' clinical reasoning.” Nursing Education Perspectives, 38(2), 57–62. doi:10.1097/01.NEP.0000000000000112. The national study reporting that approximately 23% of new graduate nurses entered practice with the clinical-reasoning competency expected for safe practice.

- Casey, K., Fink, R., Krugman, M., & Propst, J. (2004). “The graduate nurse experience.” Journal of Nursing Administration, 34(6), 303–311. doi:10.1097/00005110-200406000-00010. The originating study for the Casey-Fink Graduate Nurse Experience Survey, used widely in transition-to-practice research to track the lag between credentialed and applied competence in the first year of practice.

Drafted May 2026.